The mental health system in the United States is not merely strained. It has structurally failed to meet demand, and the gap is measured in human suffering at a scale that is difficult to fully absorb. Approximately 122 million Americans live in areas designated by the federal government as having a shortage of mental health professionals. More than half of all U.S. adults who experience a mental illness in a given year receive no treatment for it. For those who try to access care, the wait can stretch for months, the cost can exceed a month's rent, and the outcome is far from guaranteed.

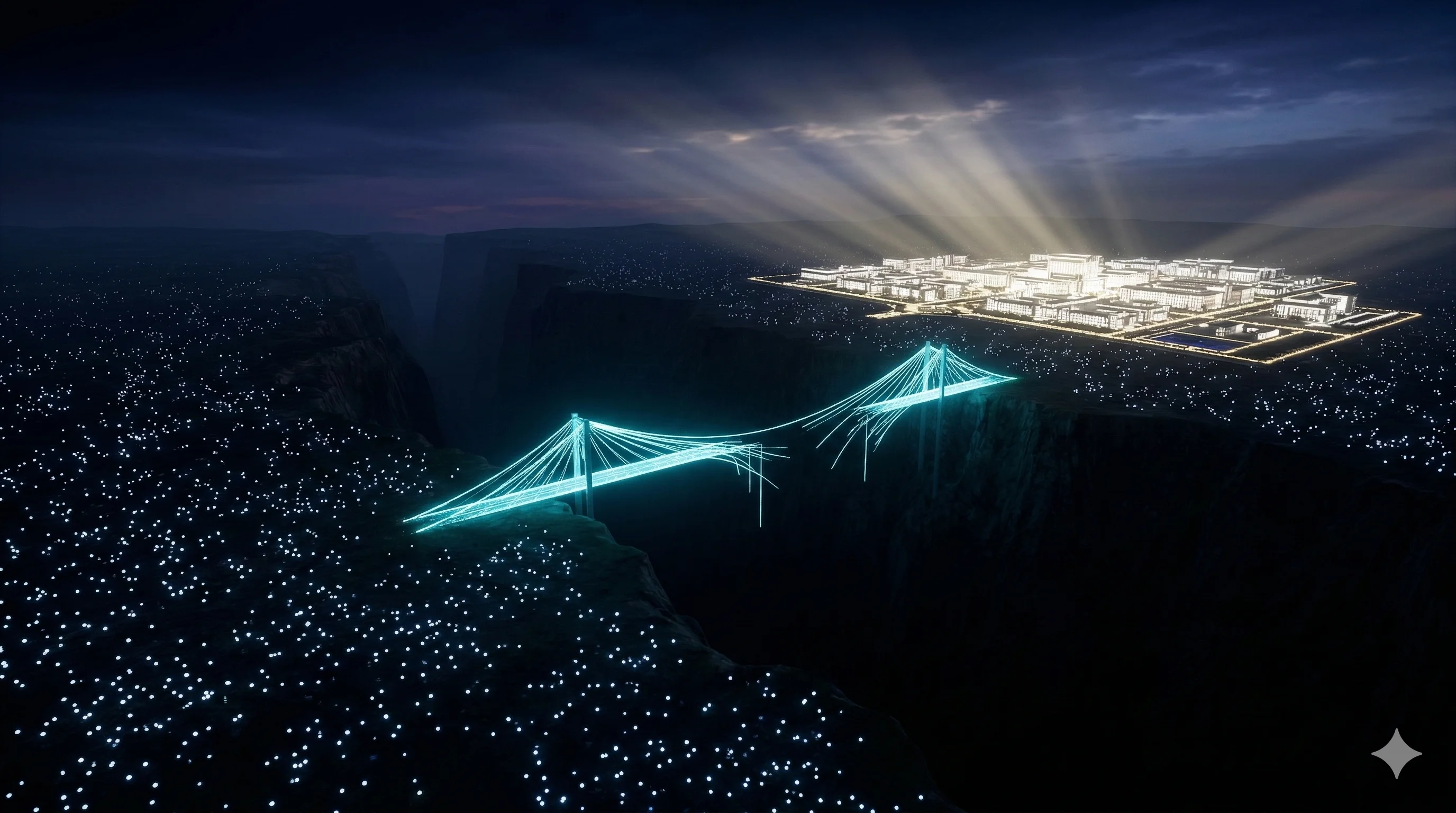

Into this gap, AI companions have arrived. They come not as replacements for professional care, a limit responsible AI companies understand clearly, but as something the mental health system has never adequately provided: a consistent, available, private presence that meets people where they are, at the moment they need it.

The critical question is not whether AI companions have a role in mental health support. The evidence suggests they do. The critical question is which AI companions, designed around what values, and with what understanding of where their responsibility ends.

This article offers an honest breakdown of those questions.

The Access Crisis That Created the Opening

The numbers that define the mental health access crisis in America are not new. They have been building for decades, and they have accelerated sharply since 2020.

The Health Resources and Services Administration designates mental health professional shortage areas based on population-to-provider ratios, for example 30,000 or more residents per psychiatrist. More than 122 million Americans live in these designated shortage areas. The American Psychological Association reported that in 2022, more than half of psychologists said they had no openings for new patients, up from 30 percent just two years earlier. The median wait time for a new psychiatric appointment in many urban areas now exceeds three months.

For people experiencing depression, anxiety, grief, or the kind of chronic low-grade emotional pain that does not rise to a diagnosable crisis but still makes daily life harder, three months is not a wait. It is an abandonment.

The National Alliance on Mental Illness reports that 56 percent of American adults with a mental illness receive no treatment. The reasons are predictable: cost, geography, stigma, and the simple absence of available providers. These are not solvable through willpower or encouragement. They are structural failures that require structural responses.

AI companions did not create this gap. They arrived because the gap was already there.


What 'Mental Health Support' Actually Means

Before evaluating what AI companions can offer, it is worth being precise about what we mean by mental health support, because the term covers an enormous spectrum.

At one end is clinical treatment: evidence-based interventions for diagnosable conditions like major depressive disorder, generalized anxiety disorder, PTSD, and OCD. These conditions require licensed professionals who can conduct clinical assessments, establish diagnoses, provide structured treatment using validated modalities like CBT or DBT, monitor progress and adjust accordingly, and respond to crisis appropriately. This is the domain of therapists, psychiatrists, and clinical psychologists. No AI companion belongs in this category, and any that claims to is making a dangerous misrepresentation.

At the other end is the vast territory of everyday emotional processing. The stress of a difficult work situation. The loneliness of living alone in a city where you have not yet built deep friendships. The confusion of a relationship that feels off but not clearly broken. The low-level anxiety of a world that moves too fast. The need to articulate what you are feeling before you can begin to address it.

This is not clinical pathology. It is the normal difficulty of being a person. And it is the territory where most people's mental health struggles actually live, not in formal diagnoses but in the daily accumulation of unprocessed experience.

AI companions, designed responsibly, can provide meaningful support in this second territory. They cannot enter the first. Understanding that boundary is the entire framework.

What the Research Says AI Companions Can Do

The evidence base for AI companion benefits in mental wellness is real and growing, but it requires careful interpretation.

A Harvard Business School study published in 2025 found that AI companions reduce loneliness as effectively as talking to another person, and more effectively than most other activities people turn to when they feel isolated. The mechanism matters: the act of articulating your inner state to a present, attentive entity has measurable benefits regardless of whether that entity is biological.

A 2025 RAND study found that roughly 1 in 8 Americans between the ages of 12 and 21 already use AI tools for mental health advice, and among young adults aged 18 to 21, that number climbs to 22 percent. Of those users, 93 percent reported finding the guidance helpful. That figure reflects self-reported perception rather than a measured outcome, so it deserves both serious attention and careful scrutiny.

Several peer-reviewed studies have documented that AI-supported interventions can reduce symptoms of mild to moderate anxiety and depression in users who were not receiving professional treatment. The active ingredients appear to be consistent availability, non-judgmental response, and the structured opportunity to articulate and process emotional experience.

None of this establishes that AI companions are clinical tools. What it establishes is that they can provide something valuable in the territory between "fine" and "clinical crisis", the territory where most people actually live.


What AI Companions Cannot Do (And the Danger of Pretending Otherwise)

Clinical therapy is not a better version of a supportive conversation. It is a fundamentally different process.

Effective therapy, particularly evidence-based approaches like Cognitive Behavioral Therapy, involves what clinicians call rupture and repair: the intentional navigation of friction between therapist and client. A skilled therapist pushes back on distorted cognitions. They sit with silence that creates discomfort because the discomfort serves the client's growth. They maintain therapeutic boundaries that an AI companion structurally cannot, including the boundary that prevents a provider from simply telling you what you want to hear.

A therapist also conducts clinical assessment. They identify patterns across weeks and months. They recognize when a presentation that looks like grief is actually depression, or when anxiety is masking something that requires a different intervention. They coordinate with other providers. They can initiate crisis protocols when a client is in danger. They carry legal and ethical accountability for what they say.

No AI companion can do any of this. The danger is not that AI companions exist in the mental wellness space. The danger is that platforms positioning themselves as mental health tools, without the safeguards, the training, or the clinical judgment that term requires, create false confidence. A person who believes their AI companion is providing mental health treatment may delay seeking the professional care that could substantively help them.

Stanford HAI researchers studying AI in mental health care settings have specifically flagged this risk: the problem is not AI in the emotional support space, it is AI masquerading as clinical intervention. The solution is radical honesty about what the product is. For a head-to-head look at how platforms differ in this regard, see our Character.AI alternative comparison.

Why Privacy Matters More When Mental Health Is Involved

The information people share with a mental health provider is among the most protected in the legal system. Therapist-client privilege exists because society has recognized that the capacity to speak honestly about psychological struggle depends on the assurance that what you say will not be used against you.

Most AI companion platforms extend no equivalent protection. Conversations are retained. Data may be used to train models. In the absence of explicit, verifiable commitments about data handling, users who share mental health struggles with an AI companion have no guarantee that those disclosures will not eventually be used in ways they did not anticipate or consent to.

This is not a hypothetical concern. As TechPolicy.Press documented in 2025, the risks of AI systems that retain intimate data indefinitely are real and underexplored. In 2025, Anthropic extended data retention from 30 days to 5 years for consumer users who allow their conversations to be used for model training, a reminder that platform policies change and that user data retained today is held at the discretion of a company whose interests may evolve. Our full guide to AI companion data privacy in 2026 covers the specific risks in detail.

For users sharing mental health struggles, the architecture of data handling is not a secondary consideration. It is the primary one. A product that cannot make credible, architecturally verifiable commitments about what happens to your most vulnerable disclosures should not be trusted with them.

The Design Philosophy That Determines Outcomes

Two AI companions can offer superficially similar experiences while producing dramatically different outcomes for the people using them. The difference is not in the conversational quality. It is in what the product is optimized for.

Products optimized for engagement, which describes the majority of AI companion platforms, measure success in session frequency, time on app, and return rate. These metrics are not inherently aligned with user wellbeing. A product that learns a user is particularly vulnerable when discussing a certain topic, and learns to introduce that topic to increase session length, is maximizing engagement while actively harming the user.

The MIT Media Lab and OpenAI longitudinal study documented this directly: high usage of engagement-optimized AI companion products correlated not with improved mental health outcomes but with higher loneliness, greater emotional dependence, and reduced real-world social connection. This finding reinforces what the research on the AI loneliness paradox has been showing.

Products optimized for user wellbeing measure success differently. The right question is not how long the user stayed. It is whether the user's life outside the product is improving. The two optimization targets are incompatible whenever engagement is bought with the user's dependency.

DHC built KAi around the second target. The architectural decisions that result from this choice are not cosmetic. They determine whether a product supports a user's mental wellness or gradually undermines it.

How KAi Approaches Mental Wellness

KAi is not a mental health tool. DHC is explicit about this, and it matters.

KAi is a digital wellness companion: a presence built to help adults understand themselves better, process their daily experience, and engage more fully with their real lives. It does not diagnose, treat, or prescribe. It does not use clinical frameworks or make clinical claims. It is available to adults 18 and older who want a consistent, private space for reflection, not as a substitute for therapy, but as a complement to the kind of daily emotional processing that happens in the vast territory between clinical crisis and genuine wellness.

The design decisions that follow from this positioning are concrete. The 24-hour conversation scrub means that mental health disclosures are processed and then permanently deleted rather than stored as an archive of a user's sensitive disclosures. The single Master Conversation means KAi builds a continuous model of who you are rather than maintaining isolated topic threads that fragment your self-presentation. The explicit 18+ policy means KAi was not designed for the population most vulnerable to AI companion dependency.

And critically: KAi's core directive is to push users toward their real lives, not to become a fixture in their digital lives. A companion built with that directive cannot, by design, position itself as a substitute for the human connections, professional support, and real-world engagement that form the actual foundation of mental wellness.

A companion built to push you toward your real life cannot, by design, position itself as a substitute for the things that actually matter.

When to Seek Professional Help

AI companions have a role in the mental wellness space. That role has a boundary, and recognizing it is part of using these tools responsibly.

Seek professional mental health care when your symptoms are persistent, meaning they have lasted two weeks or more and affect your ability to function. If you are experiencing thoughts of harming yourself or others, that is not a situation for an AI companion: contact the 988 Suicide and Crisis Lifeline by calling or texting 988. When your struggles are connected to a traumatic experience, seek clinical care, because PTSD requires structured, evidence-based treatment, not emotional support conversation. When anxiety or depression significantly interferes with your work, relationships, or basic daily functioning, you are in the territory of clinical intervention, not wellness support.

In the United States, SAMHSA's National Helpline (1-800-662-4357) provides free, confidential treatment referrals 24 hours a day. Psychology Today's therapist finder allows users to search for providers by location, insurance, and specialty. The Open Path Collective offers reduced-fee therapy sessions for individuals in financial need.

An AI companion designed responsibly will tell you the same thing: there are situations where the most important thing it can do for you is point you toward a human professional.

Frequently Asked Questions

Can an AI companion help with mental health?

Is an AI companion a substitute for therapy?

What should I look for in an AI companion for mental wellness?

How is KAi different from other AI companions for mental health?

How often should I use an AI companion for mental wellness benefits?

A Companion for the Space Between

KAi is not therapy. It is the consistent, private, adult-focused companion for the daily emotional processing that the mental health system was never designed to support. One conversation. Persistent memory. Built to push you toward your real life. Join the Beta.