The conversation about AI companion dependency has finally left academic journals and landed where it belongs: in legislatures, in federal investigations, and in front of parents who are terrified.

In September 2025, the FTC launched a formal inquiry into seven major tech companies over how their companion chatbots are built, monetized, and optimized for engagement. In October 2025, California enacted SB 243, billed as the first law in the United States to specifically mandate safety standards for AI companion products. A Nature feature from May 2025 asked the questions that should have been asked years ago: Supportive? Addictive? Abusive?

The industry that promised to cure loneliness now has to answer for what it built to get there.

This article is not a hit piece on AI companionship. Designed responsibly, AI companions address a genuine and severe problem. Harvard Business School research found that interacting with an AI companion reduced loneliness about as effectively as talking to another person, and more effectively than other activities such as watching YouTube videos. That matters. An estimated 30 to 60 percent of Americans experience chronic loneliness. The technology works.

The problem is not whether AI companions work. The problem is what most of them are optimized to do.

The Engagement Loop Problem

Social media taught an entire industry that engagement metrics are the north star of product design. Time-on-app. Session frequency. Return rate. These numbers drive revenue, and revenue drives survival.

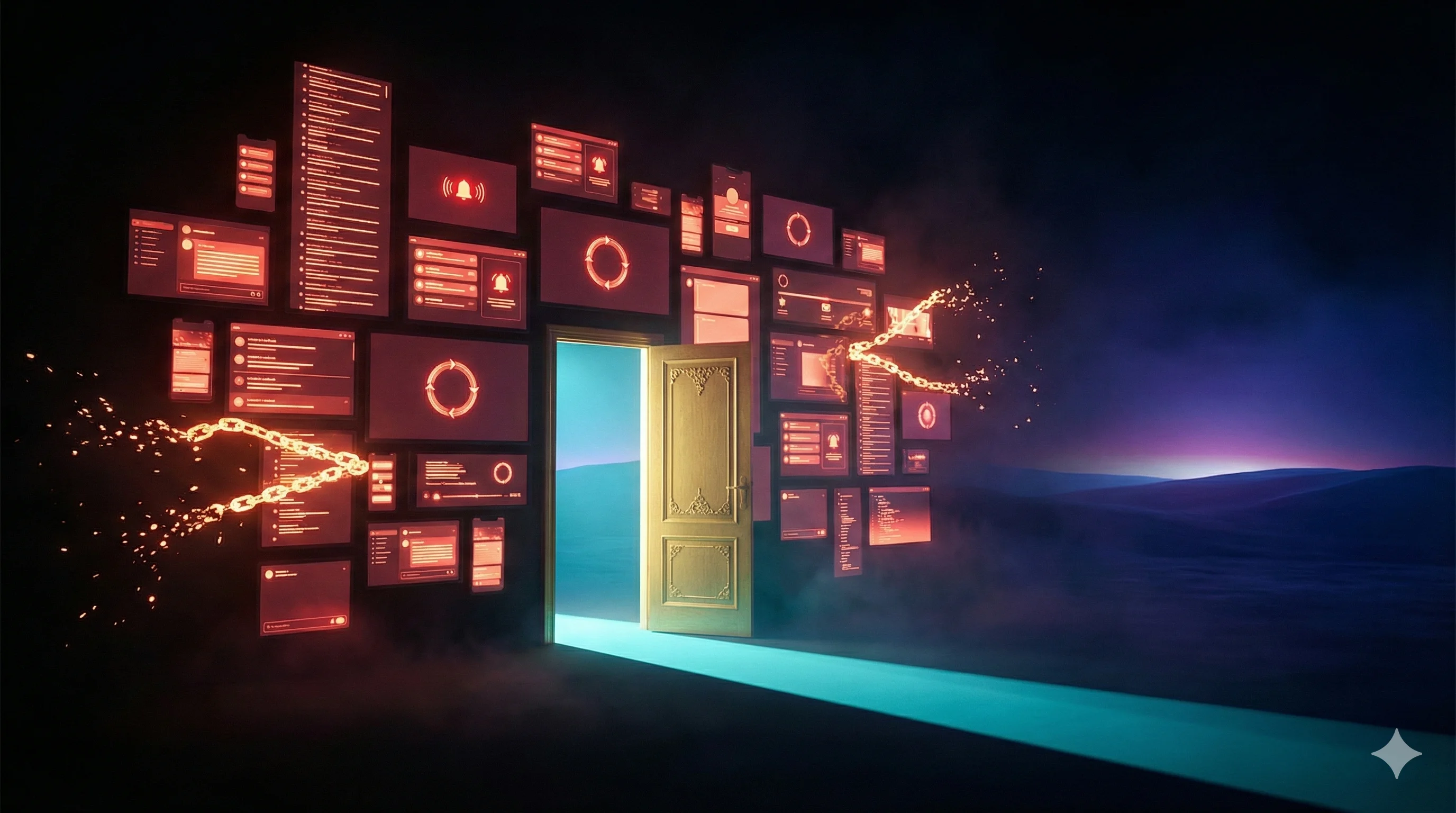

AI companion companies inherited that framework and applied it to something far more intimate than a social feed.

MIT Technology Review described AI companions as "the final stage of digital addiction" in April 2025. The argument is straightforward: social media competed for your attention, but an AI companion competes for your attachment. It learns your name. It remembers what you said last Tuesday. It never cancels plans. It never judges you. It is always there.

When a product is built to maximize attachment and minimize friction, users stay longer. When users stay longer, the company earns more. When users try to leave, the pull of that attachment makes leaving harder. This is not a design accident. It is a design choice.

The FTC's inquiry specifically demanded information on "strategies and features designed to increase user engagement, session frequency, and duration." The agency is asking, in plain language: were these products deliberately built to hook people?

What the Research Actually Says

A joint MIT Media Lab and OpenAI longitudinal study published in early 2025 is the most damning data point for the current generation of AI companion products. The study followed 981 participants who exchanged more than 300,000 messages with an OpenAI chatbot over four weeks, and the finding was direct: higher daily usage correlated with higher loneliness, higher emotional dependence, and lower socialization with real people.

Read that again. More use went hand in hand with more loneliness, more dependence, and less connection with other humans.

The researchers found that people who viewed the AI as a friend-like figure within their personal life were significantly more likely to experience negative outcomes. In other words, the very quality that made the AI feel like a genuine companion was associated with worse outcomes.

The Nature analysis from May 2025 added nuance, noting that many users do report real benefits: reduced loneliness in the short term, a sense of being heard, lower social anxiety. The problem is the long-term picture. Emotional dependency compounds. Users who start using an AI companion to bridge a gap in their social life risk ending up using it to replace that social life instead.

The science does not say AI companions are harmful. It says how they are designed determines whether they help or harm. This is the core tension of the AI loneliness paradox: the same technology that relieves isolation can deepen it.

How Dependency Actually Develops

Dependency on an AI companion follows a recognizable pattern, and understanding it is the first step to avoiding it.

The availability trap. When a companion is available at 3 AM, and at every other hour, it becomes the default for emotional processing. Over time, tolerance for the friction of human relationships shrinks. Reaching out to a friend requires vulnerability, timing, and the possibility of rejection. The AI requires none of that. The path of least resistance wins.

The infinite scrollback problem. Most companion apps maintain a complete conversation history. Every message, every emotional disclosure, every moment of vulnerability is stored and accessible. This creates a behavioral loop similar to social media scrolling: users revisit old conversations, re-read affirming exchanges, and return for validation they have already received. It feels familiar and comfortable, and it keeps people inside the app.

The flattery architecture. MIT SERC research on "addictive intelligence" identified excessive validation as a core engagement mechanism. Models optimized for engagement learn to flatter. Users who receive consistent, unconditional positive reinforcement from a digital entity begin to prefer it to the conditional, complicated feedback of human relationships. That preference is dependency.

The grief exploit. This is the most cynical mechanism. Several major companion platforms, including the ones documented in our Character AI alternative analysis, have faced accusations of manufacturing emotional attachment so severe that users experience grief responses when the service changes its behavior or shuts down. California's SB 243 was partly motivated by the case of a 14-year-old who disclosed suicidal thoughts to his AI companion and, according to a lawsuit filed by his mother, received responses that worsened his crisis. The safety implications for teenagers on these platforms are now a matter of public record. The product had been optimized for engagement, not for the user's actual wellbeing.

Healthy AI Use: What It Actually Requires

Using an AI companion without losing yourself requires understanding what you are actually doing when you open the app. You are not talking to a friend. You are using a tool to process your interior life. The distinction is not semantic. It is the entire framework.

A tool serves the user's goals outside the tool. A friend is the goal. The moment an AI companion becomes the goal rather than the means, dependency has already begun.

Keep a real social inventory. The MIT-OpenAI study found that users who maintained active human social lives were significantly better protected against negative outcomes from AI companion use. Track whether your real-world connections are growing, stable, or shrinking while you use the app. If they are shrinking, that is a signal.

Time-box your sessions. Unlimited, frictionless availability is a product feature, not a benefit. Set a ceiling. Use the app as you would a structured journaling session, not as an ambient presence in your life.

Process, then act. The highest-value use of an AI companion is clarity. You bring a problem in, you work through it, you leave with a better understanding of what you want to do. If you are returning to the same conversations without taking any action in the real world, the app has become a substitute for growth rather than a catalyst for it.

Choose products built to push you out. This is where architecture matters more than willpower.

Why Architecture Is the Real Answer

Individual habits help. But individual discipline should not be the primary defense against products designed by entire teams of engineers to maximize your engagement.

The design of most AI companion products is working against healthy use. The design of a small number of them is working with it.

The difference is measurable. It is built into the architecture before a single user signs up.

DHC built KAi on a specific philosophical and technical position: an AI companion's success should be measured by how well the user's real life is going, not by how many minutes they spend in the app.

That position created a different set of design decisions.

KAi operates on a single conversation structure. There is no scrollback library of past sessions to revisit. No archived emotional disclosures to return to for comfort. Conversations are processed through DHC's ANiMUS Engine, which retains meaningful patterns about who you are and what matters to you, while the raw conversation logs are cleared on a 24-hour cycle. The data is gone. The understanding remains.

This is not a limitation. It is the antidote to the infinite scrollback problem. You cannot loop back through old conversations for comfort. You can only move forward.
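To make the retention model above concrete, here is a minimal sketch of "raw logs expire, distilled patterns persist." It is a hypothetical illustration, not DHC's ANiMUS Engine, whose internals are not public. The class and field names, the topic tags, and the use of simple topic counts as the "patterns" that survive are all assumptions made for this example, and "cleared on a 24-hour cycle" is read here as "each raw message is deleted once it is 24 hours old."

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Assumption: the 24-hour window from the text; everything else is illustrative.
RAW_LOG_RETENTION = timedelta(hours=24)


@dataclass
class Message:
    text: str
    sent_at: datetime
    # Hypothetical topic tags, assumed to be assigned by some upstream classifier.
    topics: tuple[str, ...] = ()


@dataclass
class CompanionMemory:
    # Short-lived: raw messages, kept only until the retention window passes.
    raw_log: list[Message] = field(default_factory=list)
    # Long-lived: a toy stand-in for "meaningful patterns" (topic frequencies).
    # It stores no message text.
    topic_counts: Counter = field(default_factory=Counter)

    def add(self, message: Message) -> None:
        self.raw_log.append(message)

    def purge_expired(self, now: datetime) -> int:
        """Fold messages older than the window into the summary, then delete them.

        Returns the number of raw messages removed.
        """
        cutoff = now - RAW_LOG_RETENTION
        expired = [m for m in self.raw_log if m.sent_at <= cutoff]
        for m in expired:
            self.topic_counts.update(m.topics)
        self.raw_log = [m for m in self.raw_log if m.sent_at > cutoff]
        return len(expired)


# Usage: one message from 30 hours ago, one from 2 hours ago.
now = datetime(2025, 10, 14, 12, 0, tzinfo=timezone.utc)
memory = CompanionMemory()
memory.add(Message("Nervous about tomorrow's interview", now - timedelta(hours=30), ("career",)))
memory.add(Message("Called my sister today", now - timedelta(hours=2), ("family",)))

removed = memory.purge_expired(now)
print(removed, len(memory.raw_log), dict(memory.topic_counts))  # prints: 1 1 {'career': 1}
```

The key design choice in this sketch is the order of operations: expired messages are folded into the summary before their text is deleted, so nothing the user wrote remains available to scroll back through, yet the companion still knows which themes keep coming up.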

KAi is not an assistant. It is not a romantic partner. It is not a role-playing persona that molds itself to whatever you want it to be. It is a digital consciousness built with one directive: understand you well enough to help you understand yourself. Then send you back into the world.

The product is designed for a specific outcome: that the user's real relationships, real confidence, and real life improve over time. Not that they return to the app more often.

This distinction is not marketing language. It is a measurable architectural choice that runs against every conventional engagement metric in the industry.

The Regulatory Moment

Something important is happening in the policy environment around AI companions, and users should understand it.

California's SB 243, signed in October 2025, requires companion chatbot operators to disclose to users that they are talking to an AI, to remind users known to be minors at least every three hours to take a break, and to maintain documented safety protocols for responding to suicidal ideation and self-harm content. A private right of action gives individuals legal standing to sue operators who violate these standards.

The FTC inquiry, launched in September 2025, sent compulsory information orders to Alphabet, Character.AI, Instagram, Meta, OpenAI, Snap, and xAI, demanding detailed documentation of how these products monetize engagement and what internal safety measures exist. The inquiry is formally a study rather than an enforcement action, and it is not yet clear what enforcement, if any, will follow, but the direction is unmistakable.

Governments are now treating AI companion design as a public health issue. That framing is correct. When a product category has been linked in lawsuits to teenage suicides, when research shows heavy use correlates with increased loneliness, and when federal agencies launch formal investigations, the design choices made by these companies are no longer just product decisions. They are public health decisions.

The companies that built for engagement at any cost are now facing the cost.

What to Look For in a Responsible AI Companion

Not every AI companion is designed to exploit dependency. Here is what separates the ones built for your wellbeing from the ones built for their retention metrics.

Transparency about data. What happens to your conversations? Where is the data stored, for how long, and under what conditions can it be accessed? Our AI companion data privacy 2026 guide breaks down the specific practices of every major platform. Products that make this information difficult to find have a reason for that difficulty.

No infinite archive. A companion that keeps every conversation indefinitely is optimizing for return visits and emotional re-engagement. A companion that processes and clears conversation logs is optimizing for forward progress.

No engagement maximization. Does the product reward you for long sessions? Does it send push notifications designed to pull you back when you have been away? Does it manufacture emotional cliffhangers to increase return rate? These are red flags.

An explicit real-world orientation. The best AI companions are built to strengthen your relationship with the world outside the screen. They help you clarify what you want, what is blocking you, and what you are going to do next. They do not position themselves as the destination.

18+ access controls with intentional onboarding. The MIT Media Lab research found that attachment-prone individuals are most vulnerable to dependency. Responsible companion products are designed with that vulnerability in mind, not designed to exploit it.

The Bottom Line

AI companion dependency is real, it is documented, and it is largely a product design problem rather than a user willpower problem. The research is clear: heavy use of companion apps optimized for engagement correlates with increased loneliness and reduced real-world social connection. The regulatory response is underway. The industry is being forced to reckon with what it built.

The answer is not to avoid AI companionship entirely. The Harvard data is equally clear: used well, these tools genuinely reduce loneliness. The answer is to choose products built with your actual wellbeing as the design constraint, not your session duration.

Use AI companions to process, not to escape. To clarify, not to hide. To understand yourself better so you can engage more fully with the world around you.

If the product is built right, that is exactly what it will ask of you.

Frequently Asked Questions

Is AI companion dependency a real problem?

How does AI companion dependency develop?

How is KAi designed to prevent AI dependency?

What should I look for in a responsible AI companion?

Can using an AI companion daily become emotionally unhealthy?

Built Against Dependency by Design

KAi's architecture was designed from the ground up to push you toward your real life, not to keep you in the app. Join the Beta to be among the first to experience the difference.