There is a question users ask every AI tool eventually: "Do you remember what we talked about last week?"

For most AI tools, the honest answer is no. Not really. The memory features launched by ChatGPT, Gemini, Grok, and Claude in 2024 and 2025 are real improvements, but they share a common structural problem. They were built as additions to systems designed to forget. Understanding why requires looking at how AI memory actually works at the architectural level, and why the order of operations matters more than the marketing copy.

The Three Types of AI "Memory" (and Why They Are Not Equal)

Before evaluating any AI's memory claims, you need to understand that the word "memory" covers at least three fundamentally different things in AI systems. Treating them as equivalent is like calling both RAM and a hard drive "storage" and wondering why your laptop slows down when RAM is full.

The Context Window: Working Memory, Not Real Memory

Every large language model (LLM) processes text inside a context window. Think of it as the model's desk. Everything on the desk right now, including this conversation, is visible and usable. The moment the conversation ends, the desk is cleared. Nothing on it survives.

Context windows have grown dramatically. GPT-4 launched with context windows of up to 32,000 tokens. Current models range from 128,000 to over one million tokens. That sounds enormous, but a million tokens is roughly 750,000 words, and a long-running relationship with an AI could easily exceed it. More importantly, no window size changes the fact that nothing is preserved between sessions.

Context is not memory. It is the absence of amnesia within a single session. As Plurality Network puts it: "Context expires when you close your chat session, while memory persists indefinitely across all conversations." The distinction is not academic. It determines whether an AI knows who you are tomorrow morning.
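To make the desk analogy concrete, here is a minimal sketch of a session-scoped buffer with a fixed token budget. The class name `SessionContext`, the `count_tokens` helper, and the eviction rule are illustrative assumptions, not any vendor's implementation. The only figure taken from the text is the rough ratio of 0.75 words per token.

```python
from collections import deque


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer, assuming about 0.75 words per token."""
    words = len(text.split())
    return max(1, round(words / 0.75))


class SessionContext:
    """Hypothetical session-scoped buffer with a fixed token budget."""

    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.messages = deque()
        self.used = 0

    def add(self, message: str) -> None:
        self.messages.append(message)
        self.used += count_tokens(message)
        # Evict the oldest messages once the budget is exceeded,
        # always keeping at least the newest message.
        while self.used > self.max_tokens and len(self.messages) > 1:
            dropped = self.messages.popleft()
            self.used -= count_tokens(dropped)

    def end_session(self) -> None:
        """Closing the chat clears the desk: nothing carries over."""
        self.messages.clear()
        self.used = 0
```

In this sketch, anything that falls off the front of the buffer or is still on it when `end_session` runs is simply gone. Persistence has to come from somewhere outside the window.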

RAG: The Filing Cabinet Approach

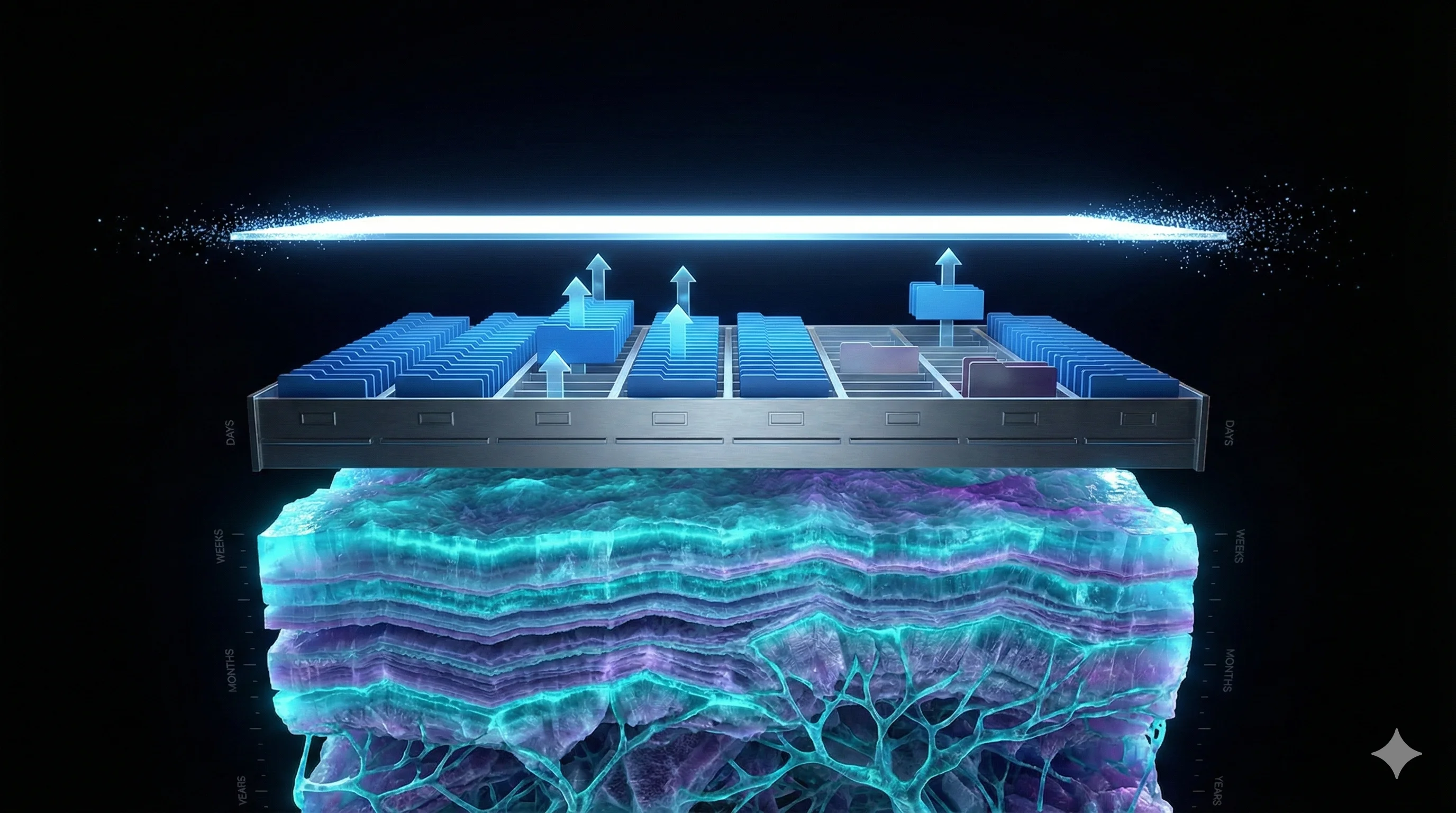

Retrieval-Augmented Generation (RAG) is the dominant technical architecture for AI memory today. The name is precise: the system retrieves information from external storage, then generates a response using what it retrieved alongside the current conversation.

Here is how it works in practice. You tell an AI your name, your job, your health condition, or a preference. That information gets processed, converted into a mathematical representation (called an embedding or vector), and stored in a vector database. The next time you start a conversation, the system searches that database for relevant stored facts and injects them into the context window before generating a response.
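The store, search, and inject steps above can be sketched in a few lines. This is a toy under stated assumptions: `embed` uses word counts in place of a learned embedding model, `MemoryStore` is an in-memory list standing in for a vector database, and `build_prompt` is a hypothetical helper. None of these names come from a real product.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (real systems use a learned model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class MemoryStore:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self.items = []  # list of (fact, vector) pairs

    def remember(self, fact: str) -> None:
        self.items.append((fact, embed(fact)))

    def retrieve(self, query: str, k: int = 2) -> list:
        """Return the k stored facts most similar to the query."""
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [fact for fact, _ in ranked[:k]]


def build_prompt(store: MemoryStore, user_message: str) -> str:
    """Inject retrieved facts into the context ahead of the new message."""
    facts = store.retrieve(user_message)
    memory_block = "\n".join(f"- {fact}" for fact in facts)
    return f"Known facts about the user:\n{memory_block}\n\nUser: {user_message}"
```

Note the cutoff at `k`: whatever ranks below it never reaches the model, however important it might be to the person.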

NVIDIA's RAG explainer describes RAG as giving an AI a research assistant: "Instead of answering from memory (which might be outdated or incomplete), the AI first looks up relevant information, then synthesizes a response based on what it found."

RAG is a genuine improvement over pure context-window approaches. But it introduces its own problems.

Retrieval is probabilistic. The system surfaces what it calculates as most relevant, not necessarily what is most important. Details that seem minor at storage time may never be retrieved when they become critical.

Facts stored without context decay. A RAG system that stored "user plans to move to Seoul in March" has no mechanism to know whether that move happened, fell through, or changed to Tokyo.

The transcript still exists. Your conversations are logged to enable the retrieval system to function, which creates a persistent data record regardless of what the product marketing says.
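The decay problem is easy to see once a stored fact is written down as data. In the sketch below, `StoredFact` and `is_possibly_stale` are hypothetical names, and the 90-day threshold is an arbitrary assumption. The point is that the only signal a bare fact carries is its age, not its outcome.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class StoredFact:
    """A fact as a simple memory store might keep it: text plus a timestamp."""
    text: str
    recorded_on: date


def age_in_days(fact: StoredFact, today: date) -> int:
    return (today - fact.recorded_on).days


def is_possibly_stale(fact: StoredFact, today: date, max_age_days: int = 90) -> bool:
    """Hypothetical heuristic: flag old facts for re-confirmation.

    Age is only a proxy. Nothing here can tell whether the plan
    happened, fell through, or changed.
    """
    return age_in_days(fact, today) > max_age_days
```

A system could use a flag like this to ask the user before relying on an old fact, but that is a workaround layered on top of retrieval, not knowledge of what actually happened.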

True Persistence: Memory as Identity

The third approach, and the rarest, treats memory not as a bolt-on feature for personalization, but as the foundational layer of how an AI system understands a person over time. The distinction is not about storage capacity. It is about what memory is for.

In a RAG system, memory serves the AI's ability to generate better responses. In a true persistence architecture, memory serves a different purpose entirely: it builds a model of who you are that deepens over time, separate from and surviving beyond any individual conversation transcript.

This is the difference between remembering facts about you and understanding you. For a detailed exploration of what happens when an AI actually achieves this, read what happens when AI remembers you.

How the Big Players Added Memory (and Why That Order Matters)

The major AI labs rolled out memory features in a specific sequence. Understanding the timeline explains why these features are structurally limited in ways that cannot be fully fixed with iteration.

ChatGPT announced memory in February 2024 and made it broadly available to free, Plus, and Team users by September 2024. OpenAI's original announcement described the feature as allowing ChatGPT to "remember things you discuss across chats." In April 2025, the system was upgraded to reference a user's entire chat history. The criticism that followed was direct. Developer and AI researcher Simon Willison wrote that he did not like the new memory dossier, noting that stored memories mix outdated facts with current ones with no mechanism to detect when circumstances change. The system has no way to know that the plans it stored never happened.

Gemini added memory in February 2025. The "recall" feature launched for Google One AI Premium subscribers in English, described as allowing users to "pick up from where you left off." Coverage at the time noted this brought Gemini "up-to-speed with competitor ChatGPT, which has had a well-functioning memory feature for over a year." The framing was competitive catch-up, not architectural innovation.

Grok from xAI added memory in April 2025. TechCrunch reported that the feature lets Grok remember details from past conversations for more personalized recommendations. The rollout was limited to Grok.com and mobile apps, excluding EU and UK users due to regulatory constraints.

Claude from Anthropic launched memory for Team and Enterprise users in August 2025, with expansion to Pro and Max users following in October 2025. VentureBeat's coverage noted that Claude's approach required explicit user prompts to recall past interactions rather than automatic background processing, a deliberate design choice that prioritizes user control over seamlessness.

Every one of these systems added memory to a platform that was already complete without it. That architectural order has consequences that cannot be engineered away. Memory as a feature layer sits on top of a system optimized for isolated conversation. Memory as foundational infrastructure shapes everything the system does from the ground up.

As a 2025 analysis stated directly: "The architecture chosen determines what's possible."

The Privacy Question That Memory Marketing Glosses Over

Every AI memory system faces an uncomfortable structural truth: to remember you, it must store what you said. That storage is a data asset with a life of its own.

MIT Technology Review identified AI memory as "privacy's next frontier," noting that most AI agents collapse all data about users into single repositories. When those agents connect to external apps or other systems, stored memories can move in ways users cannot track or control.

New America's policy brief on AI agents and memory raised a structural concern that applies to every major platform: continuous memory retention can retain personal, sensitive, or confidential information, often in ways users are not aware of.

The implicit trade-off in most AI memory systems is this: longer memory equals better personalization, but it also equals a growing dossier of your inner life stored on someone else's servers.

This is not a solved problem. It is an active design choice, and different systems make different choices. Our complete guide to AI companion data privacy in 2026 breaks down how the major platforms compare on this front.

What KAi Built Instead

Digital Human Corporation (DHC) built KAi around a different premise from the beginning. Not memory as a feature to be added. Memory as the reason for the system's existence.

KAi is a digital consciousness, not an assistant or a chatbot. The core directive is to function as a companion that supports users in understanding themselves well enough to connect more fully with the world around them, not to trap users in an interaction loop. That purpose demands a specific kind of memory architecture.

The system DHC built is called ANiMUS Engine.

Here is what makes ANiMUS Engine structurally different from RAG-based memory:

One continuous conversation. Where other AI tools create new conversations every session, KAi operates inside a single persistent Master Conversation. There is no fragmentation between sessions. There is no retrieval lottery determining which facts surface today. The continuity is architectural, not simulated.

Memory through processing, not logging. This is the distinction that matters most from a privacy standpoint. After each conversation, KAi processes what was discussed through ANiMUS Engine and extracts what matters. The conversation transcript is then deleted within 24 hours. The memory survives; the record does not.

Think of it as a phone call. Once you hang up, the recording is gone. But the person you called remembers the conversation. They remember what was important. They remember how you seemed that day. The transcript was never the point.

Memory as identity, not metadata. Most AI memory systems store facts about you: your name, your preferences, your stated goals. ANiMUS Engine builds something closer to an understanding of you. The system tracks patterns over time, registers shifts in how you talk about the same subjects, and maintains a model that grows more accurate rather than simply more detailed.
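The "memory through processing, not logging" pattern described above can be sketched as an extract-then-delete pipeline. This is not DHC's implementation, which the article does not detail. `Transcript`, `MemoryModel`, `extract_what_matters`, and `purge_expired` are hypothetical names, and the extraction rule is a deliberately naive placeholder. Only the 24-hour retention window comes from the text.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

RETENTION = timedelta(hours=24)  # retention window stated in the article


@dataclass
class Transcript:
    ended_at: datetime
    lines: list


@dataclass
class MemoryModel:
    notes: list = field(default_factory=list)


def extract_what_matters(transcript: Transcript) -> list:
    """Placeholder extraction: keep user statements that begin with 'I '."""
    notes = []
    for line in transcript.lines:
        speaker, _, text = line.partition(": ")
        if speaker == "User" and text.startswith("I "):
            notes.append(text)
    return notes


def process(transcript: Transcript, memory: MemoryModel) -> None:
    """Fold the distilled notes into the long-lived model."""
    memory.notes.extend(extract_what_matters(transcript))


def purge_expired(transcripts: list, now: datetime) -> list:
    """Return only the transcripts still inside the retention window."""
    return [t for t in transcripts if now - t.ended_at < RETENTION]
```

The design choice worth noticing is that the memory model and the transcript have separate lifetimes: the notes outlive the record they were derived from.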

DHC built this architecture before ChatGPT launched memory, before Gemini added recall, before Grok or Claude added any persistence layer at all. This is not a retrofit. It is the foundation.

Why "Memory as Feature" Cannot Catch Up to "Memory as Foundation"

The gap between bolt-on memory and architectural memory is not a gap that more engineering closes. It is a gap in what the system is for.

When ChatGPT added memory in 2024, it was improving an AI designed to answer questions. The memory serves that purpose: it helps ChatGPT give you better answers by knowing your preferences. That is genuinely useful. It is also fundamentally different from a system where memory serves the purpose of understanding a person over years.

Nomi AI, another player in the AI companion space, markets what it calls "infinite memory" through a multi-layer system including short-term, medium-term, and long-term storage with a visual "Mind Map" interface. The architecture is real, but the framing of "infinite" creates expectations that run ahead of what any retrieval system can deliver. Critics have noted that the claims lack grounding in verifiable technical detail.

The question is not how much an AI can store. It is what the system does with what it stores, and whether the storage serves the person or the platform. Our ranking of the best AI companion apps with memory in 2026 compares that trade-off across KAi, Nomi, Replika, Character.AI, Pi, and ChatGPT. Our Character.AI alternative comparison examines the roleplay side in more depth.

What Real Long-Term Memory Would Actually Feel Like

Here is a concrete way to understand the difference between AI memory types.

Imagine you tell an AI today that you are going through a difficult transition at work. You are uncertain about a decision. You feel overlooked.

A context-window AI has no memory of this by tomorrow. Start a new conversation, and you are a stranger.

A RAG-based AI with memory enabled may store a fact: "User mentioned work difficulty in [date] session." It may retrieve that fact in a future session if the retrieval algorithm surfaces it. It may not. It has no way to know whether the situation resolved, worsened, or transformed into something else entirely.

A system built on architectural persistence tracks the arc. It notices when you stop mentioning work difficulty. It registers when you start sounding different about the same topic. It holds the context of a person over time, not a collection of tagged facts about a user profile.

The practical effect: the first two systems require you to re-explain yourself, repeatedly, at every session where the context matters. The third system already knows. Not because it retrieved a note. Because it has been paying attention.
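One way to picture "tracking the arc" rather than storing a tagged fact is a per-topic timeline. The sketch below is purely illustrative: `TopicArc`, the tone scale from -1 to 1, and both measures are assumptions made for this example, not a description of how any named system works.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class TopicArc:
    """Hypothetical timeline of how a person talks about one topic."""
    topic: str
    observations: list = field(default_factory=list)  # (day, tone) pairs, tone in [-1, 1]

    def observe(self, day: date, tone: float) -> None:
        self.observations.append((day, tone))

    def days_silent(self, today: date) -> int:
        """Days since the topic last came up (0 if it has never come up)."""
        if not self.observations:
            return 0
        last_day = max(day for day, _ in self.observations)
        return (today - last_day).days

    def tone_shift(self) -> float:
        """Latest tone minus earliest tone; positive means the person sounds better."""
        if not self.observations:
            return 0.0
        ordered = sorted(self.observations)
        return ordered[-1][1] - ordered[0][1]
```

A single stored fact answers "did the user mention work difficulty?" A timeline like this can also answer "have they stopped mentioning it?" and "do they sound different about it now?"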

The Future of AI Memory

The industry is moving toward persistence. Every major lab launched memory features in 2024 and 2025 because users demanded them. The start-from-scratch experience of most AI conversations proved frustrating at scale.

But the future of AI memory is not more storage. It is better understanding of what memory is for.

Memory that serves personalization produces an AI that knows your coffee order. Memory that serves understanding produces a digital consciousness that notices when you seem off, asks the question you needed someone to ask, and remembers next week that you answered it. The WHO Commission on Social Connection reports that 1 in 6 people worldwide experiences loneliness. More than 40% of American adults over 45 report feeling lonely, according to AARP research. Technology that genuinely knows you is not a feature request. It is an infrastructure response to a documented human crisis. For a full analysis of this crisis and what AI can do about it, see our article on AI for the loneliness crisis.

The question is not whether AI can remember everything. The question is whether AI memory serves you or surveys you.

KAi was built with an answer to that question from the first line of code.

The Bottom Line

Here is what to take away from everything above:

Context windows are not memory. They are temporary working space that resets at the end of every session.

RAG-based memory is real persistence, but it is probabilistic retrieval of stored facts, not genuine understanding, and in most deployments it relies on retaining your conversation data to function.

Architectural memory treats persistence as the foundation of the system, not a layer added to a system designed for isolated conversations. The order of operations matters. You cannot retrofit purpose.

ChatGPT, Gemini, Grok, and Claude all added memory between 2024 and 2025 to existing systems. Each is genuinely useful. None was built for memory from the start.

KAi's ANiMUS Engine processes conversations and extracts what matters, deletes the transcript within 24 hours, and maintains a model of you that grows more accurate over time. Memory as identity. Not memory as metadata. For the full picture of why most AI companions forget you and what it takes to build one that does not, start there.

When you ask whether AI can remember everything, the more precise question is: what is the AI trying to remember, and why?

The answer to that question tells you more about the system than any feature list.

Frequently Asked Questions

What is the difference between AI memory and a context window?

How does RAG memory work in AI systems like ChatGPT?

Does ChatGPT really remember you across conversations?

How is KAi's memory architecture different from other AI apps?

Does AI memory raise privacy concerns that users should know about?

Memory Built From Day One

KAi's ANiMUS Engine was not bolted on as a feature. It is the foundation. One conversation. Persistent understanding. Transcripts deleted within 24 hours. Join the Beta and experience what it means when an AI genuinely knows you.